Google Bard’s New Visual Feature Is the Future of AI

Chatbots can officially see the world

Google just released a new feature for its Bard chatbot that puts it miles ahead of ChatGPT.

The feature was quietly included in Bard’s list of updates released this morning, and it seems that very few people have noticed it so far. But it is a complete game-changer.

What is this new feature? The ability to accept images as prompts, in addition to text.

That’s right: Bard is now officially multimodal! I’ve been testing it since the new system was released this morning, and I’m already surprised by some of the things it can do.

Here are some experiments I’ve run to date.

Diagnosing a plant problem

My AeroGarden hydroponic garden sits on a countertop in my house. You might think that this means it would never have bugs, but you would be wrong.

For some reason, my basil seems to constantly attract aphids. The first time I saw them, I had no idea what they were, and had to do a lot of searching on the internet to find out.

This morning, when I realized I had aphids on my basil again, I wondered if Bard could diagnose the problem. I gave Bard a simple picture of a plant leaf and asked it what it saw.

It accurately described the problem, noting that aphids were feeding on the leaf and that the damage can lead to yellowing, among other things:

Close-up of a leaf with aphids.

The leaf is green and healthy, but it is covered with small, green insects. These insects are aphids, which are sap-sucking pests that can damage plants. Aphids congregate together in the center of the leaf, and they feed on the sap of the leaf. Leaf wilting and yellowing are signs of aphid infestation.

Google’s Lens platform is capable of similar visual processing, but it lacks the ability to process an image and deliver clear, narrative feedback. Bard appears to build on Lens but takes it much further.

Guessing my age from an image of my hand

I wondered what information Bard would be able to glean from a very simple picture. To find out, I took a picture of my hand and asked Bard how old I was, without any additional context.

First, it told me that age is just a number and that I shouldn’t worry about it. But when I persisted and asked it to make its best guess, Bard told me:

Based on the photo, I’d estimate your age to be somewhere in your mid-30s. Your hand looks relatively young, with few wrinkles or age spots.

It also told me that it based its estimate on my ring size and other factors.

That’s correct: I’m in my mid-30s. It’s impressive and a little scary that Bard worked this out from a simple photo of my hand.

Very ambiguous images

For a recent story I wrote about Jelly Belly jelly beans, I took a close-up photo of myself holding a yellow jelly bean.

This is the type of photo that is very difficult for humans to accurately caption. Without the context of knowing that I was writing a jellybean review, you might think it was a small stone, a pill, a bead, or any of a myriad other small yellow objects.

However, Bard was not fooled. It accurately determined that I was holding a yellow jelly bean, and even described the bokeh blur in the background and other creative elements of my photo (though it did detect a non-existent ring).

The image shows a man holding a yellow jelly bean in his hand. The jelly bean is round and smooth with a slightly shiny surface. It is bright yellow in color with no other markings on it. The person’s hand is slightly wrinkled, and their fingers are long and thin. He is wearing a silver ring on his index finger. The background of the image is white.

The image is taken from a close-up perspective, so the jelly bean and the person’s hand fill most of the frame. The background is out of focus, which helps draw attention to the jelly bean. The overall impression of the image is one of simplicity and clarity.

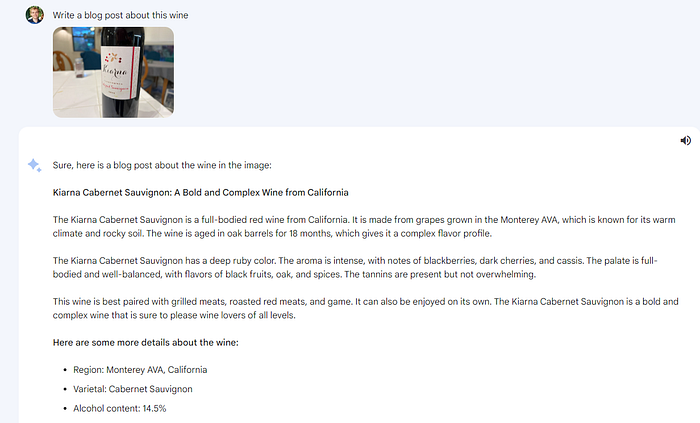

Diet Recommendations

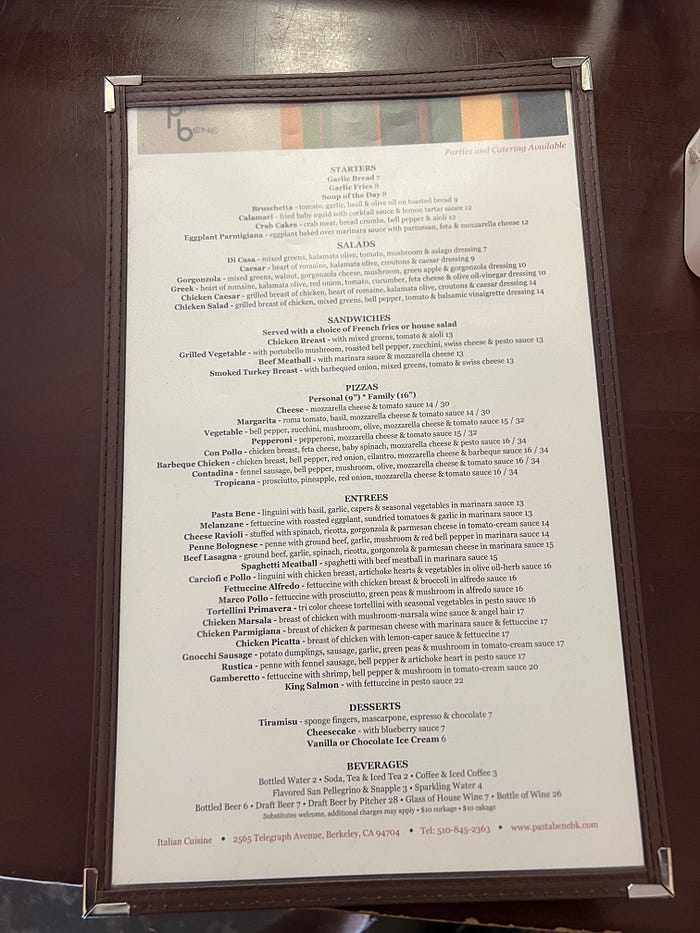

Choosing the best dishes to order on a restaurant menu is always a challenge, especially if you’re following a specific diet.

The problem becomes even more difficult if you go to a restaurant that offers a ton of options! I recently visited an Italian place in Berkeley, California with an overly dense menu.

I showed Bard a picture of the menu, told it I was on a Mediterranean diet, and asked it what to order.

It recommended the salmon, which seems like a good choice for the diet (although I’d skip the pesto sauce).

Lunch Ideas from a Fridge Photo

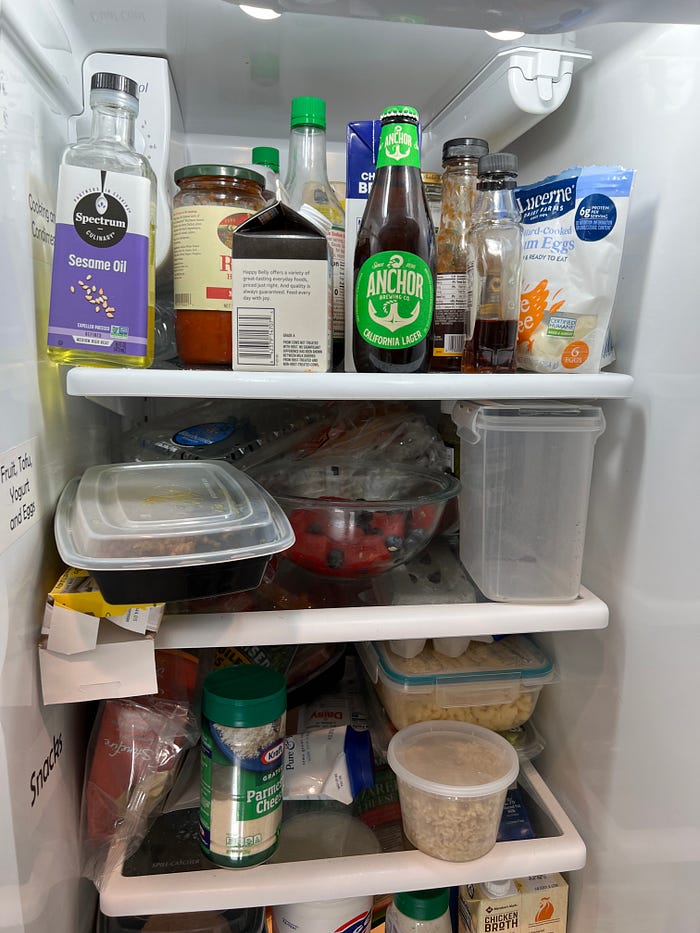

As a final test, I gave Bard a photo of the inside of my fridge, along with the prompt “Here’s a photo of what’s in my fridge. What could I make for lunch?”

Bard replied that, among other ideas, I could make an “egg salad sandwich”: It’s a classic lunch option that’s easy to make. You can use hard-boiled eggs that you have in your fridge, and you can also add other ingredients, such as mayonnaise, mustard, and celery.

Indeed, it had spotted the hard-boiled eggs on the top shelf of the fridge, and possibly the mayo up there as well. It must have also seen the naan bread on the bottom shelf.

Egg salad sandwiches taste great!

Bard even gave me a recipe:

Sure, here’s an egg salad sandwich recipe:

Ingredients:

6 hard-boiled eggs, peeled and chopped

1/4 cup mayonnaise

1 tbsp Dijon mustard

1 tbsp celery, finely chopped

1/2 tsp salt

1/4 tsp black pepper

1/4 tsp paprika

4 slices of bread

Instructions:

In a medium bowl, mix eggs, mayonnaise, mustard, celery, salt, pepper and paprika.

Stir until well mixed.

Spread egg salad on bread slices.

Serve and enjoy!

A Huge Step Forward

Bard’s new visual capabilities may sound like an incremental update, but they’re actually a big step forward for AI chatbots.

Bard essentially has eyes now. With a little help from a cellphone-camera-wielding human, the bot can see the world, not just understand it through text.

From what I’ve seen so far, its visual understanding seems to be quite complex and nuanced. It doesn’t seem to be merely extracting simple keywords or object tags from the images I uploaded and then processing them as text. Although I can’t confirm it, Bard does appear to be truly multimodal now.

This is also a big step for Google. Unlike OpenAI, Google has decades of tagged photos uploaded by users to its Google Maps platform and other visual tools, not to mention the billions of images in Google Image Search, which it may or may not use for training.

Google also has a lot more processing power than OpenAI. Multimodal AI typically takes more computing power than text-based AI. This is probably a big reason why OpenAI hasn’t yet switched on the multimodal version of GPT-4. It may be unable to afford the resources required to run such a system at scale.

In short, Google has an advantage in terms of data and computing power. And with the new visual Bard, it appears to be using that advantage to great effect.

Bard can already perform a wide range of visual tasks. Its capabilities will only increase with time and training. That makes it official: AI chatbots can now see.

Posted by Talkaaj.com
